The Two Guiding Principles for Data Quality in Digital Analytics

Sep 19, 2012

Sherlock Holmes once exclaimed, “Data! Data! Data! I can’t make bricks without clay.” The famous fictional detective couldn’t form any hypotheses or draw any conclusions until he had sufficient data. As digital analysts, data-driven marketers, or business decision-makers, we are equally dependent upon the digital clay that forms our optimization bricks. You too should cry “Data! Data! Data!” if you don’t have the numbers you need to understand and manage your online business (even if your outburst just serves as a cathartic release).

In web analytics, you need a solid foundation of data because it underpins everything. Your digital data is the basic building block of reports, analyses, business decisions, and successful optimizations. This foundation doesn’t happen on its own; it takes careful planning and execution. Your data also needs to be managed and maintained over time. No, you can’t “set and forget.” Your digital data will never be perfect. Just like many other forms of data, it can get messy. You strive to manage what you can control internally (code changes, content updates, campaign tracking codes, URL redirects, etc.) and then monitor and respond appropriately to external issues that you can’t necessarily control (browser limitations, search engine changes, cookie deletion, bots, etc.). You can’t afford to have your organization’s confidence in its numbers erode over time, as it can have several negative repercussions:

Rather than focusing on value-generating activities such as analysis, testing, or helping with implementing site enhancements, your digital analysts spend their time battling fire drills as they investigate and explain discrepancies in the data.

Digital analysts and data-driven marketers waste time working around problems or gaps in the data, having to do overly complex reporting or analysis.

Different business groups break apart into separate data fiefdoms as they build their own data sets to answer their unique business questions. They end up using whatever analytics tool and approach meets their individual needs, which may be contrary to the needs of the overall enterprise and other teams.

Business teams abandon using their digital data as they question its validity. They end up ignoring the data and “flying blind.” These teams revert to familiar, gut-driven habits and have no idea how their initiatives are performing (I’m not making this up – I’ve seen this exact scenario happen at very large organizations).

Companies become paralyzed and fail to act on any insights in their data because they are unsure if the opportunities or problems are real. Doing nothing is viewed as safer than potentially making mistakes based on bad data.

Regardless of whether you create, share, or consume data, you should be concerned about the quality of your digital data within your organization. Experts in different IT fields have identified several dimensions that influence data quality, and these same criteria can certainly be used to evaluate your current digital data:

Accuracy: How closely does your data represent what really happened on your website, campaign, or mobile app? How in line with or askew from the accepted values is it?

Consistency: How uniform or erratic is your data over time? Are you capturing a lot of strange or unexpected values?

Relevance: When you have questions about your website or online campaigns, can you turn to your web analytics tool for insights? Does your digital data satisfy the needs of your key stakeholders?

Completeness: Do you have data on all of the areas of your digital business that you need to measure and understand? Is there anything substantially missing from your digital data that weakens your ability to use and apply it as widely as you’d like?

Timeliness: Is there a delay between when you get your digital data (in a usable form) and when you need to act upon it?

I’ve cherry-picked the factors that I felt were relevant to web analytics, but there are other dimensions mentioned by these experts such as interpretability, accessibility, coherence, validity, auditability, conciseness, objectivity, reliability, comparability, clarity, etc. However, I actually want to take a less academic and less complicated approach to data quality where we view data from a straightforward business perspective.

The Two Guiding Principles for Data Quality

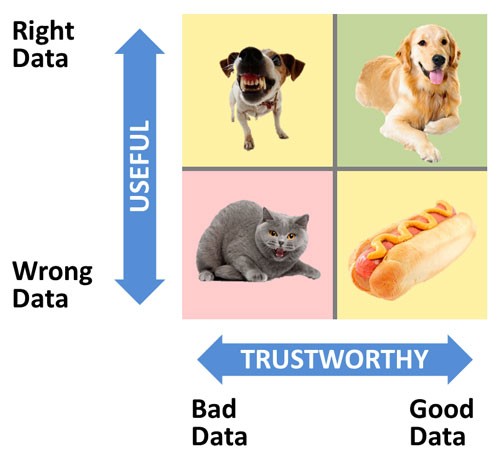

In my mind, the two key principles for data quality are usefulness and trust. Most business executives have two main questions on their mind when evaluating their digital data. How useful is my data? Do I trust it? The more your digital data is seen as being useful and trustworthy, the more its value increases in the eyes of your executives and their teams, which is important if you’re trying to foster a data-oriented environment.

Okay, I had a bit of fun with this matrix, but hopefully it conveys the importance of having both useful and trustworthy data (or dogs).

Principle #1: Usefulness (Right or Wrong Data?)

The usefulness of your digital data is defined by its intended purpose. For managers, the data needs to be able to answer questions they have about their key strategic initiatives. For this reason, it’s important to have a clear understanding of your online business strategy and key business objectives. If your data is supposed to help measure and optimize your firm’s digital performance, it’s imperative that your implementation is aligned with your company’s business goals and digital strategy. Typically, when there’s misalignment, your data will be perceived as being less useful. For some demanding managers, it may be more black-and-white—it’s the difference between having the right data and the wrong data to manage their part of the business.

It’s important to remember your digital initiatives (websites, campaigns, apps, etc.) are not static and, therefore, your analytics implementation can’t be static either. Your organization should be in the practice of constantly fine-tuning and calibrating its data to the evolving needs of your business. If digital analytics is treated as an afterthought and not integrated into the web development process, you’re going to run into issues where your sites and content are changing but your digital analytics tool will be stuck measuring and reporting on what was important in the past—not what’s important today!

The usefulness factor is more than just relevance, as you can have relevant data that isn’t necessarily useful. To use a baseball analogy, relevant data is in the right ballpark while useful data is in the strike zone. With useful data, there’s a higher degree of calibration to the needs of the business. The out-of-the-box reporting in most web analytics tools will provide some relevant information. However, I’ve found the most compelling and useful reports are typically those that are customized or tailored to answer specific business questions. Useful data is far more actionable because it sheds light on critical business initiatives that demand action.

Timeliness is another key attribute of useful data. Data can have a shelf life and become less and less useful over time. In most cases with digital analytics, you’re dealing with real-time or near real-time data so timeliness isn’t typically an issue. However, that assumes you have what you need directly out of your analytics reports, and it doesn’t need to be massaged in any way, which can lead to delays. Finally, if your data is incomplete then it’s going to be less useful than if it covered the entire picture of what needed to be measured and understood.

Principle #2: Trust (Good or Bad Data?)

The usefulness principle always comes before the trust principle because it doesn’t matter how much you trust your data if it’s not useful to begin with. However, trust is also essential because no manager will act upon data they don’t trust. In my view, trust is a function of both accuracy and consistency. Data accuracy means your data matches its actual true value. This raises the question of what the “actual true value” is and where it can be found. To validate your digital data’s accuracy, it helps if you have a system that has the correct numbers, which can serve as a benchmark for your web analytics data. For example, you might compare your data against the numbers in another web analytics tool, reports in another digital marketing tool, or data from a backend, transactional system.

Two sets of data not matching doesn’t necessarily mean that one is correct and the other is wrong. It’s important to understand how various systems collect and process data differently so that you can separate real data discrepancies from the subtle nuances of each system. Typically, you want to see the numbers moving in the same direction and staying within an accepted threshold of variance even though the results may not match up directly.

You need to be careful to establish one source of truth for your digital data even though you may have data in a variety of other tools. Periodically checking the data accuracy is one thing, but constantly questioning the numbers and comparing the same results across multiple tools can waste time that could be better spent on optimizing your digital business. In addition, organizations can fall into the trap of needing to have their digital data match exactly. While you might not want your digital data to vary more than a certain percentage from another system’s data, you’ll expend a lot of effort and realize diminishing returns as you strive to remove all margin of error. You can’t afford to become immobilized or entangled by minor imperfections in your data. If your data is off 5-10%, you should consider your data to be “good” and start using it to analyze and optimize your digital initiatives.
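To make the benchmark comparison concrete, here is a minimal Python sketch of the two checks described above: whether the numbers stay within an accepted variance and whether they move in the same direction. The function names and the sample order counts are hypothetical, invented purely for illustration, and the 10% default simply mirrors the upper end of the 5-10% range mentioned above. Treat it as a starting point under those assumptions rather than a standard method.

```python
def percent_variance(analytics_value, benchmark_value):
    """Signed difference of the analytics figure from the benchmark, in percent."""
    if benchmark_value == 0:
        raise ValueError("benchmark value must be non-zero")
    return (analytics_value - benchmark_value) * 100.0 / benchmark_value


def within_tolerance(analytics_value, benchmark_value, tolerance_pct=10.0):
    """True when the analytics figure is within +/- tolerance_pct of the benchmark."""
    return abs(percent_variance(analytics_value, benchmark_value)) <= tolerance_pct


def same_direction(analytics_series, benchmark_series):
    """True when both series rise, fall, or stay flat together in every period."""
    def deltas(series):
        return [later - earlier for earlier, later in zip(series, series[1:])]

    return all(
        (da > 0 and db > 0) or (da < 0 and db < 0) or (da == 0 and db == 0)
        for da, db in zip(deltas(analytics_series), deltas(benchmark_series))
    )


# Hypothetical daily order counts: analytics tool vs. backend order system.
analytics_orders = [940, 1010, 985]
backend_orders = [1000, 1060, 1040]

# Every day is checked against the tolerance, then the trend is compared.
all_days_ok = all(
    within_tolerance(a, b) for a, b in zip(analytics_orders, backend_orders)
)
trend_ok = same_direction(analytics_orders, backend_orders)
print(all_days_ok, trend_ok)
```

If both checks pass, the gap between the two systems is probably down to how each one collects and processes data, and the time is better spent on analysis than on chasing an exact match.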

Often, there isn’t another system that you can use to benchmark your digital data’s accuracy. In these cases, you need to reassure people that they can trust the numbers by validating that your analytics tagging was done correctly. Consistency becomes important as digital analytics can be susceptible to data inconsistencies, which can erode people’s trust if the issues are not minimized or explained. Overall, your data may be very accurate, but unusual glitches can throw all of your data into question if you’re not careful.

Having well-defined processes around deploying new page tags will help to decrease internally-generated discrepancies. For other external factors that impact your data, your analytics team needs to be adept at spotting, investigating, and responding to them quickly—which may mean putting a technical workaround in place to prevent future occurrences (if possible) and explaining to stakeholders why the irregularity occurred in the first place. Educating data users on consistency issues can help them to avoid poor practices that lead to bad data and also be more understanding when uncontrollable anomalies appear in your digital data. Maintaining the integrity of your digital data is like managing a garden; it’s something that requires constant attention. If your implementation becomes overrun by weeds, you may lose your entire crop of useful data. All the hard work that went into planning and planting the crop will be wasted, and there won’t be a bountiful harvest of meaningful insights and action.

However, every garden has a few weeds, and that can’t stop you from using your digital data. Some of your reports may not be as clean or complete as you’d like, but that doesn’t stop you from gleaning useful insights from them. I agree with Avinash Kaushik, who insisted that we acknowledge our data isn’t perfect, get over it, and use the data as best we can. That doesn’t mean you shouldn’t try to avoid or mitigate wrong/bad data, just that your focus should be on trying to use your current data as much as possible rather than waiting for perfection. It can be frustrating when a key report appears to be broken, but it’s only in rare situations that all your data is completely unusable.

Tips for Improving Data Quality

When you’re evaluating your data quality, you might discover you have both usefulness and trust issues. I’ve identified several tips that help you to overcome these issues. Some of the following tips apply to one of the two principles while others span both guiding principles.

Iterative process: Creating useful data is an iterative process where you may not nail the desired insights perfectly the first time. You may need to start somewhere and then keep refining the data until your data shifts from being relevant to essential. Keep asking the question of your business stakeholders, “what would make the digital analytics reports even more valuable?”

Analysis isolates shortcomings: Performing analysis on your data is a great way to identify data quality problems both from a usefulness and a trust perspective. When you actually dive in and take a close look at the data to answer real business questions, you see issues that couldn’t be spotted if you’re just scanning reports or dashboards. Just like test driving a used car, you don’t notice what’s wrong or missing until you’re actually in the driver’s seat on the open road.

Feedback loops: One of the dangers in digital analytics is to set up the reporting and jump to the next challenge without soliciting feedback from internal customers. Whenever possible and where appropriate, digital analysts should be following up with internal stakeholders on their reports to verify if they are useful and trusted.

More accountability for tagging: Organizations should hold business teams accountable for their results, but they should also hold them accountable for adhering to internal tagging processes and standards, which ensure digital initiatives are tagged correctly. Missing tags or poorly executed tracking hurts your company’s ability to manage its performance and should not be tolerated.

Early warning system: Dashboards and automated alerts can be configured to catch and highlight potential data issues. These tools can serve as early warning systems so that the digital analytics team can investigate and resolve/explain an issue before it becomes a serious disruption to the business.

Offsite audit: Analytics teams should make time to audit their reports on a recurring basis (quarterly, semi-annually?)—potentially as part of an offsite team event. If you don’t make time to review your reports on a regular basis, it won’t happen. It’s a great way to review the digital measurement strategy and re-calibrate reports that are not implemented correctly or no longer relevant.

Auditing tool: To complement your human-led audits, you may want to leverage a site scan or tag auditing tool such as Adobe DigitalPulse, ObservePoint, or Accenture Digital Diagnostics (formerly Maxamine). These tools can detect missing or incorrectly configured tags that can impact your data quality.
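As an illustration of the early warning tip above, an automated alert can be as simple as comparing each day’s number with the days just before it. The sketch below is only one possible approach, not how any particular analytics product implements alerts: the function name, the seven-day window, the three-standard-deviation cutoff, and the page-view figures are all assumptions chosen for the example.

```python
from statistics import mean, stdev


def find_anomalies(daily_values, window=7, max_sigma=3.0):
    """Return the indexes of days that deviate sharply from the preceding window."""
    if window < 2:
        raise ValueError("window must cover at least two days")
    anomalies = []
    for i in range(window, len(daily_values)):
        history = daily_values[i - window:i]
        baseline = mean(history)
        spread = stdev(history)
        if spread == 0:
            # A perfectly flat history: treat any change at all as unusual.
            if daily_values[i] != baseline:
                anomalies.append(i)
        elif abs(daily_values[i] - baseline) > max_sigma * spread:
            anomalies.append(i)
    return anomalies


# Hypothetical daily page views; the sharp drop on the last day is the kind of
# change that might point to a tag going missing after a site release.
page_views = [5200, 5100, 5350, 5280, 5150, 5300, 5250, 5180, 1200]
alerts = find_anomalies(page_views)
print(alerts)
```

A flagged day is a prompt to investigate, not proof of a data problem. A real campaign spike or a holiday lull would trip the same check, so the analytics team still needs to explain what happened.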

As the detective Sherlock Holmes recognized, data is essential to solving problems and unlocking insights. For modern digital detectives, data is the foundation that either limits your ability to optimize your digital business or empowers you to take it to the next level. The more useful and trustworthy your digital data is, the more impact it will have on your organization. However, remember that you can’t afford to wait for perfect data, as you’ll pass up valuable optimization opportunities that you can seize today.